I made another thing: Float Explorer!

What does it do?

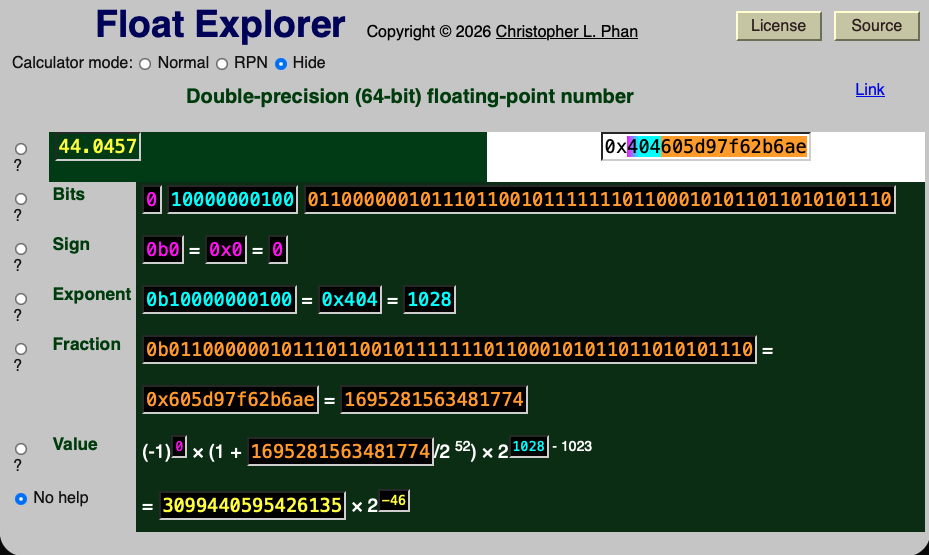

This is a simple web app that shows you how a floating-point number is stored in your computer's memory. For example, consider the number 44.0457.¹ In your computer, this number is stored as a double-precision (64-bit) floating-point number, which has the value

\[ (-1)^{0} \times \left( 1 + \frac{1695281563481774}{2^{52}} \right) \times 2^{1028 - 1023}.\]

The three numbers 0, 1028, and 1695281563481774 are stored in binary as 0b0, 0b10000000100, and 0b0110000001011101100101111111011000101011011010101110, respectively. Hence, the entire number is represented in binary as 0b0100000001000110000001011101100101111111011000101011011010101110, or in hexadecimal as 0x404605d97f62b6ae. (The convention is to prefix hexadecimal numbers with 0x and binary numbers with 0b.)
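As a rough cross-check of the numbers above, here is a minimal Python sketch that pulls the three parts out of a float with the standard `struct` module. The helper name `decompose` is made up for this illustration and is not taken from Float Explorer.

```python
import struct

def decompose(x: float) -> tuple[int, int, int]:
    """Split a double into its (sign, exponent, fraction) bit fields."""
    # Reinterpret the 8 bytes of the double as one unsigned 64-bit integer.
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    s = bits >> 63                  # 1 sign bit
    e = (bits >> 52) & 0x7FF        # 11 exponent bits
    f = bits & ((1 << 52) - 1)      # 52 fraction bits
    return s, e, f

s, e, f = decompose(44.0457)
print(s, e, f)                            # 0 1028 1695281563481774
print(struct.pack(">d", 44.0457).hex())   # 404605d97f62b6ae
```

The big-endian format codes (`">d"` and `">Q"`) are used so that the hexadecimal string reads in the same order as the binary string above.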

It also has a calculator feature, so you can look at how floating-point arithmetic handles calculations such as 0.4 × 3.

Double-precision floating-point numbers

Every double-precision floating-point number (other than ±0, the subnormal numbers, ±∞, or NaN)² is represented as \[ (-1)^{s} \times \left( 1 + \frac{f}{2^{52}} \right) \times 2^{e - 1023} \hspace{10em} \text{(1)}\] where the sign part s, the exponent part e, and the fraction part f are nonnegative integers that are stored in 1, 11, and 52 bits, respectively (for a total of 64 bits). Any expression written this way can also be written in the form \(a \times 2^{b}\), where \(a\) is an odd integer and \(b\) is an integer. Float Explorer will give you this form as well; for example, \(44.0457 \approx 3099440595426135 \times 2^{-46}\).
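Here is one way to recover the odd-integer form in Python, as a sketch built on the built-in `float.as_integer_ratio()`. The function name `odd_times_power_of_two` is hypothetical, and this is not necessarily how Float Explorer computes it.

```python
def odd_times_power_of_two(x: float) -> tuple[int, int]:
    """Return (a, b) with x == a * 2**b exactly and a odd (x finite, nonzero)."""
    if x == 0:
        raise ValueError("zero has no odd-integer form")
    num, den = x.as_integer_ratio()    # exact, lowest terms; den is a power of 2
    b = -(den.bit_length() - 1)        # den == 2**(-b)
    # When den == 1 the numerator can still be even, so strip factors of 2.
    while num % 2 == 0:
        num //= 2
        b += 1
    return num, b

print(odd_times_power_of_two(44.0457))   # (3099440595426135, -46)
print(odd_times_power_of_two(8.0))       # (1, 3)
```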

It's like scientific notation

Here's another way to think about the form given in expression (1): The number 44.0457 can be written in scientific notation as \(4.40457 \times 10^{1}\). In general, we write a number³ in scientific notation as \((-1)^{s} \times d_0.d_1 d_2 \dots d_n \times 10^{p}\), where each digit \(d_j\) has \(0 \le d_j < 10\) and \(d_0 \ne 0\). We understand the decimal notation \(d_0.d_1 d_2 \dots d_n\) to be shorthand for \[ \sum_{j = 0}^n \frac{d_j}{10^j}.\]

Likewise, a number⁴ could be written in a binary form \((-1)^{s} \times \text{0b}b_0.b_1 b_2 \dots b_n \times 2^{p}\), where each digit \(b_j\) has \(0 \le b_j < 2\) (in other words, \(b_j = 0\) or \(b_j = 1\)) and \(b_0 \ne 0\). This second condition means that \(b_0 = 1\) always. We understand the binary notation \(\text{0b}1.b_1 b_2 \dots b_n\) to be shorthand for \[ 1 + \sum_{j = 1}^n \frac{b_j}{2^j}.\]

Suppose that we have n = 52 (in other words, there are 52 digits right of the binary point in the binary expansion of our number). Then, \[\begin{aligned} (-1)^s \times \text{0b}1.{b_1}{b_2}{b_3}\dots b_{52} \times 2^p &= (-1)^s \times \left( 1 + \sum_{j = 1}^{52} \frac{b_j}{2^j} \right) \times 2^p \\ &= (-1)^s \times \left( 1 + \frac{\sum_{j = 1}^{52} b_j\times 2^{52-j}}{2^{52}} \right) \times 2^{(p + 1023) - 1023}\\ &= (-1)^s \times \left( 1 + \frac{f}{2^{52}} \right) \times 2^{e - 1023}, \end{aligned}\] where \[f = \sum_{j = 1}^{52} b_j \times 2^{52 - j}\] and e = p + 1023, the form in expression (1).
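The derivation above can be tried out directly. The following sketch takes the 52 binary digits and the exponent p, computes f and e as in the derivation, and packs them into a double. The function name `from_binary_scientific` is invented for illustration, and the code assumes the biased exponent lands in the normal range 1 to 2046.

```python
import struct

def from_binary_scientific(s: int, digits: str, p: int) -> float:
    """Build the double (-1)**s * 0b1.<digits> * 2**p from its 52 binary digits."""
    assert len(digits) == 52 and set(digits) <= {"0", "1"}
    f = int(digits, 2)      # equals the sum of b_j * 2**(52 - j)
    e = p + 1023            # biased exponent (assumed to satisfy 1 <= e <= 2046)
    bits = (s << 63) | (e << 52) | f
    (x,) = struct.unpack(">d", struct.pack(">Q", bits))
    return x

# 1001 repeated 12 times, followed by 1010, with p = -2
print(from_binary_scientific(0, "1001" * 12 + "1010", -2))   # 0.4
```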

Sadly, not every real number, or even every rational number, can be written exactly in this form. For most numbers, the best we can do is approximate. It's because of these approximations that we end up with rounding errors.
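To see the approximation concretely, Python's standard `fractions` module can report the exact rational value of a stored double. This is only a small illustration; the variable name `stored` is my own.

```python
from fractions import Fraction

stored = Fraction(0.4)             # exact value of the double nearest to 0.4
print(stored)                      # 3602879701896397/9007199254740992
print(stored == Fraction(2, 5))    # False
print(stored - Fraction(2, 5))     # 1/45035996273704960

# A denominator that is a power of 2 poses no problem:
print(Fraction(0.375) == Fraction(3, 8))   # True
```

The denominator 9007199254740992 is 2 to the power 53, so the stored value is a ratio with a power of 2 on the bottom, which 2/5 can never be.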

An example of rounding error

Suppose we open up a Python REPL and do the following:

    >>> a = 0.4
    >>> a
    0.4
    >>> 3 * a
    1.2000000000000002

Shouldn't this be 1.2? Let's keep going:

    >>> 3 * a - 1.2
    2.220446049250313e-16
    >>> import math
    >>> math.log2(3 * a - 1.2)  # log base 2
    -52.0

It appears that 1.2 and the computer's calculation of 3 × 0.4 are exactly \(2^{-52}\) apart.
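That gap is the spacing between neighboring doubles near 1.2. A quick way to check this, assuming Python 3.9 or later (where `math.ulp` and `math.nextafter` were added), is the following sketch:

```python
import math

a = 0.4
gap = 3 * a - 1.2
print(gap == 2.0 ** -52)                        # True
print(math.ulp(1.2) == 2.0 ** -52)              # True: spacing of doubles in [1, 2)
print(math.nextafter(1.2, math.inf) == 3 * a)   # True: 3 * a is the very next double
```

In other words, the computed product misses the stored value of 1.2 by the smallest possible amount.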

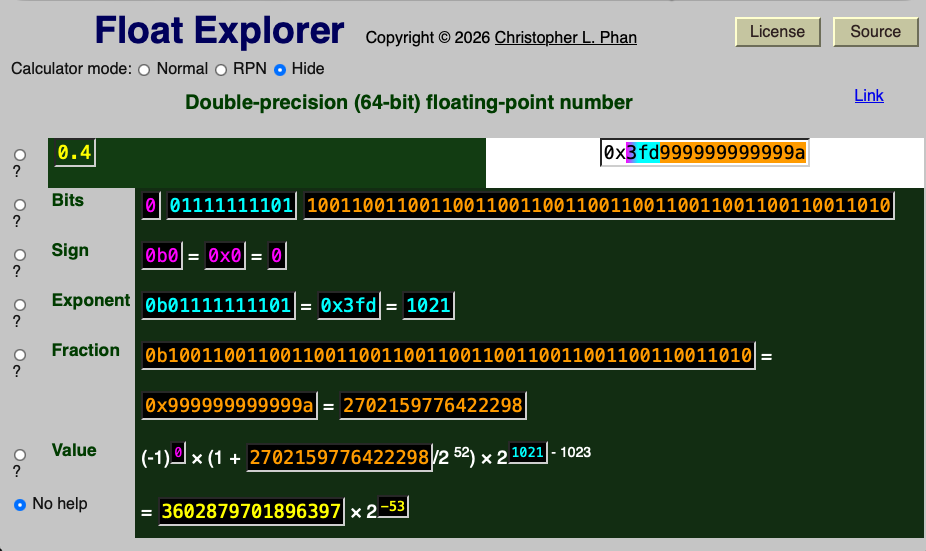

How is 0.4 represented?

Plugging 0.4 into Float Explorer, you see that the fraction part will be represented by 1001 repeated 12 times, followed by 1010. In hexadecimal,⁵ the fraction part is 0x999999999999a.

To understand what's going on better, consider this line of reasoning:

Note 1/3 = 0.3333.

Therefore, 1/3 × 3 = 0.9999.

Of course, 1/3 is not actually equal to 0.3333, but rather 0.3333…. There is a small rounding error that makes the end result off by \(10^{-4}\).

Why is 1/3 = 0.3333…? If you ask a mathematician (such as myself), the answer you might get is that \[\begin{aligned} 0.3333\dots &= \sum_{j = 1}^{\infty} \frac{3}{10^j} \\ &= \frac{3}{10} \sum_{j = 0}^{\infty} \left(\frac{1}{10}\right)^j \\ &= \frac{(3/10)}{1 - 1/10} \\ &= \frac{(3/10)}{(9/10)} \\ &= \frac{3}{9} = \frac{1}{3}. \end{aligned}\] People usually first encounter the geometric series in calculus (assuming they learn it at all). However, in elementary school you might have done this calculation:

You recognize that you are in a loop, and that 3s will be added as digits ad infinitum.

Let's use long division to calculate a hexadecimal expansion of 2/5.

You may be tempted to start with 0.4, but we are expanding in hexadecimal, not decimal. The hexadecimal value 0x20 is equal to 32, and 5 × 6 + 2 = 32. In hexadecimal, the decimal calculation 32 - 30 = 2 is written 0x20 - 0x1e = 0x02.

Much like in the case of the decimal expansion of 1/3, you now recognize that we are in a loop, where additional digits of the hexadecimal expansion will be 6.

So, the hexadecimal expansion of 2/5 is 0x0.666…. We can also verify this using a geometric series:

\[\begin{aligned} \text{0x}0.666\dots &= \sum_{j = 1}^\infty \frac{6}{16^j} \\ &= \frac{6}{16} \sum_{j = 0}^\infty \left(\frac{1}{16}\right)^j \\ &= \frac{(3/8)}{1 - 1/16} \\ &= \frac{(3/8)}{(15/16)} \\ &= \frac{2}{5}. \end{aligned} \]
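The long-division procedure is easy to mimic in code. Here is a minimal sketch that works in any base; the function name `expansion_digits` and its parameters are my own choices for this illustration.

```python
def expansion_digits(p: int, q: int, base: int, n: int) -> list[int]:
    """First n digits after the point of p/q in the given base (0 <= p < q)."""
    digits = []
    r = p
    for _ in range(n):
        r *= base               # "bring down a zero"
        digits.append(r // q)   # next digit of the quotient
        r %= q                  # remainder carried to the next step
    return digits

print(expansion_digits(1, 3, 10, 6))   # [3, 3, 3, 3, 3, 3]
print(expansion_digits(2, 5, 16, 6))   # [6, 6, 6, 6, 6, 6]
print(expansion_digits(2, 5, 2, 8))    # [0, 1, 1, 0, 0, 1, 1, 0]
```

In the hexadecimal case the remainder is 2 after every step, which is the loop described above.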

Now, it's straightforward to convert between hexadecimal and binary, since each hexadecimal digit corresponds exactly to four binary digits. Specifically, 0x6 is equal to 0b0110. Therefore, \[\begin{aligned} \frac{2}{5} & = \text{0x}0.666\dots \\ &= \text{0b}0.0110011001100110\dots \\ &= \text{0b}1.10011001100110011\dots \times 2^{-2} \\ &= (-1)^{0} \times \left(1 + \text{0b}0.10011001\dots \right) \times 2^{-2} \\ &= (-1)^{0} \times \left(1 + \text{0x}0.999\dots \right) \times 2^{-2} \\ &= (-1)^{0} \times \left(1 + \frac{\text{0x}0.999\dots \times 2^{52}}{2^{52}}\right) \times 2^{-2} \\ &= (-1)^{0} \times \left(1 + \frac{\text{0x}9999999999999.9999\dots}{2^{52}}\right) \times 2^{1021-1023}, \end{aligned}\] since 0b1001 = 9 = 0x9. Therefore, to get something in the form \[(-1)^{s} \times \left(1 + \frac{f}{2^{52}} \right) \times 2^{e-1023}\] we round 0x9999999999999.999… (that's 13 nines in front of the hexadecimal point) to the nearest integer, 0x999999999999a, getting \[0.4 \approx (-1)^{0} \times \left(1 + \frac{\text{0x}999999999999a}{2^{52}} \right) \times 2^{1021 - 1023}.\]
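The rounding step can be reproduced with exact rational arithmetic. This is a sketch using the standard `fractions` module; the variable names are mine, and the exponent p = -2 is taken from the derivation above rather than computed.

```python
from fractions import Fraction

x = Fraction(2, 5)
p = -2                                  # chosen so that 1 <= x / 2**p < 2
significand = x / Fraction(2) ** p      # 8/5, i.e. 0b1.10011001...
f_exact = (significand - 1) * 2**52     # 0x9999999999999.999... as an exact fraction
f = round(f_exact)                      # nearest integer (ties go to even)

print(hex(f))          # 0x999999999999a
print((0.4).hex())     # 0x1.999999999999ap-2
```

The second line of output is Python's own hexadecimal rendering of the stored double, and it shows the same fraction part and the same exponent of -2.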

Multiplication rounding errors

Essentially, 0.4 is represented as \[ 0.4 \approx (-1)^0 \times \text{0b}1.1001100110011001100110011001100110011001100110011010 \times 2^{-2}.\] (That's 1001 repeated 12 times followed by 1010.) Likewise, 3 is represented as \[3 = (-1)^0 \times \text{0b}1.1000000000000000000000000000000000000000000000000000 \times 2^1. \] Therefore, to calculate 0.4 × 3, the computer calculates \[\hat q = \text{0b}1.1001100110011001100110011001100110011001100110011010 \times \text{0b}1.1,\] and then returns \(p = (-1)^{0 + 0} \times q \times 2^{1 - 2}\), where \(q\) is \(\hat q\) rounded to 53 significant bits (the leading bit plus the 52 bits that fit in the fraction part). Here is the calculation in binary of \(\hat q\) and \(q\):

Hence, the approximation p for 0.4 × 3 is \[\begin{aligned} p &= (-1)^{0 + 0} \times q \times 2^{1-2} \\ &= (-1)^0 \times q \times 2^{-1} \\ &= (-1)^0 \times \text{0b}10.011001100110011001100110011001100110011001100110100 \times 2^{-1} \\ &= (-1)^0 \times \text{0b}1.0011001100110011001100110011001100110011001100110100 \times 2^{0} \\ &= (-1)^0 \times \left(1 + \frac{\text{0b}11001100110011001100110011001100110011001100110100}{2^{52}} \right) \times 2^{0} \\ &= (-1)^0 \times \left(1 + \frac{\text{0x}3333333333334}{2^{52}}\right) \times 2^{1023 - 1023} \end{aligned} \]
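The hand calculation can be replayed with exact integers. The following is only a sketch of the idea (multiply the significands exactly, normalize, then round once), not a description of what the hardware literally does. All names are invented for illustration.

```python
from fractions import Fraction

m_a = (1 << 52) + 0x999999999999A       # 0.4 is (m_a / 2**52) * 2**-2
m_b = (1 << 52) + (1 << 51)             # 3.0 is (m_b / 2**52) * 2**1, i.e. 0b1.1
q_hat = Fraction(m_a * m_b, 1 << 104)   # exact product of the two significands
exponent = -2 + 1

# The product lies in [1, 4); shift once if needed so that it lies in [1, 2).
if q_hat >= 2:
    q_hat /= 2
    exponent += 1

# Round to 52 fraction bits. Python rounds exact halves to the even neighbor.
# (A result of 2**52 would need one more shift; that does not happen here.)
f = round((q_hat - 1) * 2**52)

print(hex(f), exponent + 1023)                       # 0x3333333333334 1023
print(3 * 0.4 == (1 + f / 2**52) * 2.0**exponent)    # True
```

In this particular product the discarded part is exactly half of the last kept bit, so the tie-breaking rule is what pushes the final digit from 3 up to 4.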

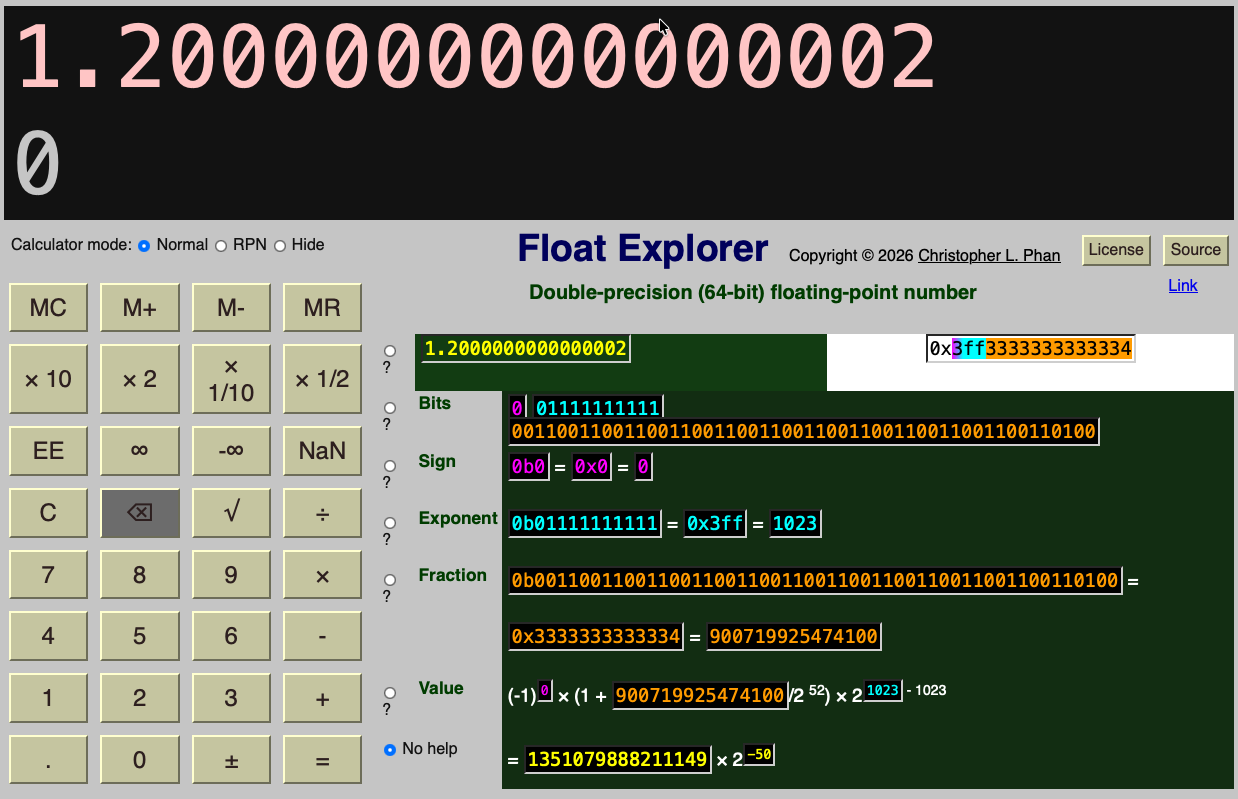

Put Float Explorer into Normal mode, then enter the following keystrokes: 0 . 4 × 3 =. The result will be 1.2000000000000002, with a sign of s = 0, exponent of e = 1023, and fraction of f = 0x3333333333334, which matches what we did by hand above.

In the Normal calculator mode of Float Explorer, we calculate 0.4 × 3 ≈ 1.2000000000000002.

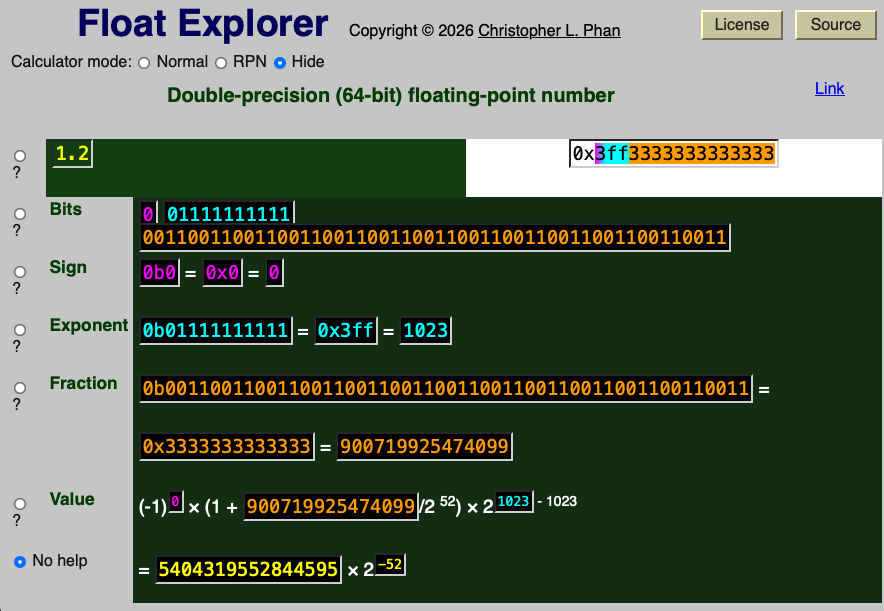

On the other hand, if we use Float Explorer to examine the correct value of 0.4 × 3 = 1.2, we get a sign of s = 0, exponent of e = 1023, and fraction of f = 0x3333333333333. Note the difference in the last digit between the two fraction parts.
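The same comparison can be made outside Float Explorer with Python's built-in `float.hex()`, which prints the fraction part in hexadecimal. A small sketch (the variable names are mine):

```python
exact = 1.2
computed = 0.4 * 3

print(exact.hex())         # 0x1.3333333333333p+0
print(computed.hex())      # 0x1.3333333333334p+0
print(computed == exact)   # False
```

The `p+0` suffix is the unbiased exponent, which corresponds to e = 1023 in expression (1).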

My hope

My hope is that this will be helpful to someone trying to make sense of floating-point numbers and the weird rounding errors that can occur with floating-point arithmetic.

1. The University of Oregon's Fenton Hall (in Eugene, Oregon) and the house where I lived from summer 2013 until summer 2024 (in Winona, Minnesota) are both at a latitude of 44.0457° N, despite being nearly 2500 km apart.

2. IEEE floating-point formats make a distinction between negative and positive zero. For example, under floating-point arithmetic, \(1 / \infty = 0\) while \(1 / (-\infty) = -0\).

3. Specifically, to be able to express a real number r exactly in (decimal) scientific notation, we must have r = p/q for some relatively prime integers p and q where q > 0 and q divides some power of 10. All other real numbers will be approximated to n decimal places.

4. A similar caveat applies here as in footnote 3, except the denominator must divide (and hence be) a power of 2 instead of 10.

5. Numbers in hexadecimal, or base 16, are typically written with the familiar digits 0–9 as well as the letters a–f. To distinguish from decimal numbers, the prefix 0x is often added to hexadecimal numbers. Counting in hexadecimal, the first 33 nonnegative integers are 0x0, 0x1, 0x2, 0x3, 0x4, 0x5, 0x6, 0x7, 0x8, 0x9, 0xa, 0xb, 0xc, 0xd, 0xe, 0xf, 0x10, 0x11, 0x12, 0x13, 0x14, 0x15, 0x16, 0x17, 0x18, 0x19, 0x1a, 0x1b, 0x1c, 0x1d, 0x1e, 0x1f, and 0x20.